The Lead

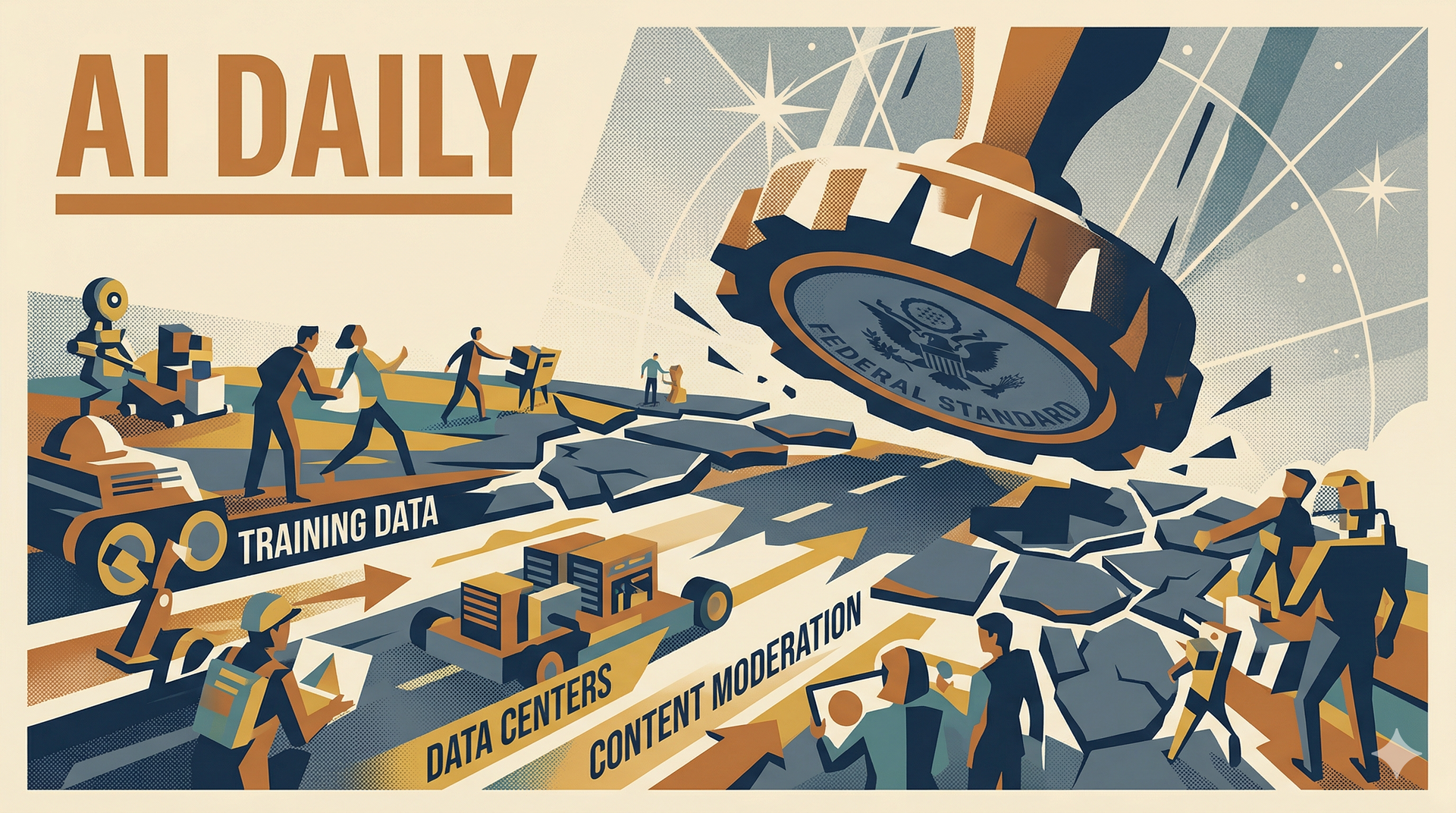

- The Trump administration released its National Policy Framework for Artificial Intelligence today, and the headline provision is a direct call for Congress to preempt state-level AI regulation.

- The framework explicitly targets what it calls the "patchwork" of state laws that have been multiplying since 2024, and asks for a single federal standard. It also takes a clear position on the copyright question that's been hanging over every AI lab: training on copyrighted material likely qualifies as fair use.

- Other pillars include streamlining data center permitting, protecting children from online harm, and preventing what the administration calls "AI censorship" of political speech.

The Deep Dive

If you run a company that deploys AI, this framework matters more than any model release this month.

Right now, building an AI-powered product that works nationally means navigating a growing maze of state-level requirements. California has disclosure mandates, Colorado passed its own accountability act, Illinois has biometric consent rules, New York City has its automated employment decision law, and Texas is working on its own version. Every new state law creates another compliance surface, and the costs fall hardest on smaller companies that can't afford a dedicated policy team for each jurisdiction.

The framework is asking Congress to replace all of that with a single federal standard. That's a massive simplification if it happens, and I think it's directionally right even though I have concerns about how the details get negotiated. The current trajectory of 50 different state AI laws is untenable for builders. It doesn't protect consumers better; it just makes deployment slower and more expensive, which advantages the companies big enough to absorb the legal overhead. A federal standard, if it's well-designed, levels that playing field.

The fair use provision is equally consequential. Every major AI lab trains on copyrighted material, and the legal ambiguity around whether that's permissible has been the single biggest unresolved liability in the industry. Britannica just joined the growing list of publishers suing OpenAI. BMG filed a copyright suit against Anthropic today. The White House is signaling that the executive branch views training as fair use, which doesn't settle the legal question (that's still headed to the courts), but it gives AI companies a stronger policy foundation while the cases play out.

I've been speaking about AI governance for almost two years now, and here's what I think people miss about frameworks like this: the details matter less than the signal. The signal is that the federal government wants to accelerate AI development, not constrain it. Whether you agree with that posture or not, if you're making deployment decisions right now, the regulatory risk just dropped meaningfully for companies building AI products in the U.S. The data center permitting provisions respond directly to the power constraints I've been covering all week. Morgan Stanley projected a 9-to-18 gigawatt shortfall through 2028. Streamlining permitting won't solve that overnight, but it's the policy lever that matters most.

One thing I want to flag: the "AI censorship" provision is going to be contentious. Meta announced today that it's rolling out AI systems to handle the bulk of content enforcement on Facebook and Instagram, reducing reliance on human moderators. The tension between "don't censor political speech" and "use AI to remove harmful content faster" is going to be one of the defining policy fights of the next two years, and if you're building AI moderation tools, you should be reading this framework line by line.

Also Worth Knowing

- Cursor shipped Composer 2 yesterday, and the open-source attribution drama is more interesting than the benchmarks. The model is priced at $0.50 per million input tokens, 86% cheaper than its predecessor, and outscored Claude Opus 4.6 on Terminal-Bench 2.0. But a developer caught the model ID routing to kimi-k2p5-rl-0317, revealing the base is Moonshot AI's Kimi K2.5. Moonshot's head of pretraining publicly called Cursor out, noting the tokenizer is identical. Kimi K2.5's license requires prominent attribution for products exceeding $20 million in monthly revenue or 100 million users, and Cursor almost certainly clears that bar at a $29.3 billion valuation. Yet they marketed Composer 2 as proprietary tech with no Kimi branding. I believe in the open-source flywheel, but this is the part that few people pay attention to: what happens when licensing terms actually bite? Ignoring attribution corrodes the trust that makes the ecosystem work.

- OpenAI announced it's acquiring Astral, the creators of the popular Python tools uv and Ruff, in a direct shot at Anthropic's developer ecosystem. Claude Code hit $1 billion in annualized revenue in six months. OpenAI's response is to buy the tools Python developers already depend on, then integrate them into Codex and a new desktop superapp that combines ChatGPT, Codex, and the Atlas browser into a single agentic workspace. The developer tooling war is now a three-way fight: Cursor (own model, own editor), Anthropic (Claude Code embedded everywhere), and OpenAI (acquire the tool chain). Each strategy tells you something different about where they think the value lives.

- Donald Knuth published a paper titled "Claude's Cycles" earlier this month after Claude Opus 4.6 solved an open graph theory conjecture he'd been stuck on for weeks. The 87-year-old father of algorithm analysis opened the paper with "Shock! Shock!" and closed by saying he'll have to reconsider his views on generative AI. Claude found the construction in about an hour; Knuth wrote the formal proof. Those are different contributions, and I don't want to overstate what happened. But when the most respected living computer scientist names a paper after an AI and calls the result "a dramatic advance in creative problem solving," the baseline for what these models can contribute to serious research just shifted.

The Builder's Take

This week clarified the landscape in ways that matter for anyone making real decisions.

The hardware story at GTC told us inference costs are collapsing. The a16z report told us AI is disappearing into existing tools. The bubble conversation told us spending is outrunning revenue and a repricing is coming. And today, the policy story told us the federal government is trying to clear the runway.

If you're a builder, the environment just got meaningfully friendlier. Lower inference costs. A policy framework pushing toward federal preemption. A fair use signal on training data. Permitting streamlining for infrastructure. The friction is coming down on almost every front.

But here's what I keep coming back to. The Cursor/Kimi drama is a perfect microcosm of where we actually are. The open-source ecosystem enabled a startup to build a competitive coding model for a fraction of frontier costs. That's genuinely good for builders. But skipping attribution to market someone else's base model as your own proprietary breakthrough poisons the well for everyone. Open source only works when the norms are respected.

The companies that win the next phase won't just have the best technology or the friendliest regulatory environment. They'll be the ones that do the work honestly: decompose real workflows, build genuine integration, respect the ecosystem they depend on, and close the 36-point gap between "using AI" and "mature AI deployment" that NVIDIA's own survey identified this week.

The runway is clear. The question is whether you're building something worth flying on it.

Keep building,

— JW